Domain-Specific Fine-Tuning of LLMs for Elevator Emergency Response Systems

DOI: https://doi.org/10.58190/ijamec.2026.165

Keywords: Edge AI, Elevator Safety, Fine-Tuning, Large Language Models, QLoRA

Abstract

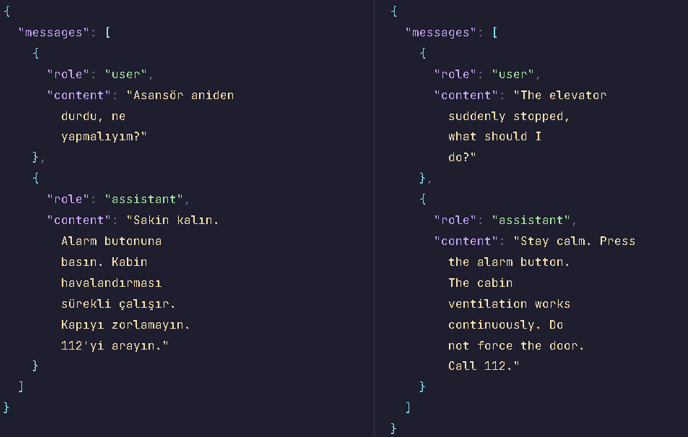

Large Language Models (LLMs) have demonstrated remarkable capabilities in natural language understanding and generation. However, applying them in safety-critical domains such as elevator emergency response requires domain-specific knowledge and reliable behavior, particularly when they are deployed as offline edge AI systems with constrained computational resources. This study fine-tunes the Gemma-2-9B-Instruct model with Quantized Low-Rank Adaptation (QLoRA) for Turkish elevator emergency scenarios, targeting deployment on embedded touchscreen control panels inside elevator cabins. Unlike cloud-based systems that rely on internet connectivity for retrieval, our edge deployment operates entirely offline, so domain knowledge must be encoded directly into the model parameters through fine-tuning. We developed a specialized Turkish dataset of 1,155 question-answer pairs covering diverse emergency situations. Our evaluation shows significant improvements after fine-tuning: ROUGE-1 increased from 0.259 to 0.317 (a 22.49% relative gain), BLEU improved by 218.11%, the hallucination rate fell from 54% to 22%, and inference was 49% faster. The resulting 4.8 GB quantized model runs entirely on embedded hardware without network dependencies, providing immediate, reliable emergency guidance. These results validate parameter-efficient fine-tuning for safety-critical edge AI applications.
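The abstract reports an overlap metric and several percentage changes without spelling out how they are computed. The sketch below shows one common way to compute ROUGE-1 F1 (clipped unigram overlap) and a relative change. It is an illustrative assumption, not the authors' evaluation code: the function names `rouge1_f1` and `relative_change_pct` are hypothetical, tokenization is plain lowercase whitespace splitting with no stemming, and the Turkish sentences are invented examples rather than items from the study's dataset.

```python
from collections import Counter


def rouge1_f1(reference: str, candidate: str) -> float:
    """ROUGE-1 F1: harmonic mean of unigram precision and recall.

    Assumption: lowercase + whitespace tokenization, no stemming.
    """
    ref_tokens = reference.lower().split()
    cand_tokens = candidate.lower().split()
    if not ref_tokens or not cand_tokens:
        return 0.0
    # Clipped overlap: each unigram counts at most min(ref count, cand count) times.
    overlap = sum((Counter(ref_tokens) & Counter(cand_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(cand_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def relative_change_pct(before: float, after: float) -> float:
    """Relative change in percent: (after - before) / before * 100."""
    return (after - before) / before * 100.0


if __name__ == "__main__":
    # Hypothetical reference answer and model output (not from the dataset).
    ref = "asansör durursa alarm butonuna basın ve sakin kalın"
    cand = "alarm butonuna basın ve yardım bekleyin"
    print(f"ROUGE-1 F1: {rouge1_f1(ref, cand):.3f}")
    print(f"ROUGE-1 change: {relative_change_pct(0.259, 0.317):+.2f}%")
    print(f"Hallucination change: {relative_change_pct(54, 22):+.2f}%")
```

Python's `str.lower()` is not Turkish-locale aware (it maps `I` to `i`, not the dotless `ı`), and whitespace tokenization ignores Turkish suffixation, so a production ROUGE implementation with different tokenization may give different scores. Applied to the rounded abstract values, `relative_change_pct(0.259, 0.317)` gives about 22.39%, close to the reported 22.49%, which was likely computed from unrounded scores; the hallucination drop from 54% to 22% is 32 percentage points, or roughly a 59% relative reduction.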

License

Copyright (c) 2026 International Journal of Applied Methods in Electronics and Computers

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.