Deep Learning-Based Lung Cancer Classification and Grad-Cam++ With Lime-Supported Explainability Analysis

DOI:

https://doi.org/10.58190/ijamec.2026.164

Keywords:

Explainable artificial intelligence, Lung cancer, Grad-CAM++, LIME, Medical image analysis

Abstract

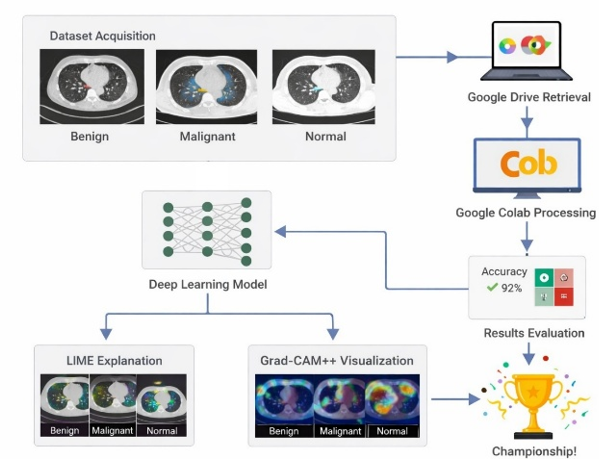

This study classifies lung cancer with deep learning methods and interprets the resulting models with explainable artificial intelligence (XAI) approaches. The dataset consisted of 1933 chest computed tomography images labeled as normal, benign, or malignant. EfficientNet-B4, MobileNetV3-Large, and ResNet50 architectures were trained using transfer learning, and their performance was evaluated with 10-fold cross-validation using accuracy, precision, recall, F1-score, and ROC-AUC. MobileNetV3-Large showed the highest overall performance, with 97.05% accuracy and a ROC-AUC of 99.70%. EfficientNet-B4 and ResNet50 also delivered high and balanced performance, achieving effective results in lung cancer classification. Grad-CAM++ and LIME were used to make the model decisions more interpretable and to support their clinical reliability. The Grad-CAM++ analyses revealed that the models focused primarily on anatomically significant regions within the lung parenchyma during classification. The LIME analyses, in turn, provided superpixel-level explanations of the local regions that contributed most to the class decisions. The explainability maps obtained for the normal, benign, and malignant classes showed that each class exhibited distinct spatial attention and contribution patterns. In conclusion, deep learning-based lung cancer classification offers a more reliable and transparent framework for clinical decision support systems when it is evaluated not only with high performance metrics but also in conjunction with Grad-CAM++ and LIME-supported explainability analyses.
The findings demonstrate that explainable artificial intelligence approaches play a significant role in improving model reliability in medical image analysis.
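
The evaluation protocol summarized in the abstract (stratified folds plus per-class metrics averaged over the three classes) can be illustrated with a minimal sketch. This is not the authors' code: the function names `stratified_folds` and `macro_metrics`, the round-robin fold assignment, the macro averaging, and the toy labels are all assumptions made for illustration. The abstract does not state how the folds were built or how the metrics were averaged, and ROC-AUC is omitted here because it requires predicted class probabilities.

```python
import random
from collections import defaultdict

# Class names taken from the abstract; everything else below is hypothetical.
CLASSES = ["normal", "benign", "malignant"]


def stratified_folds(labels, k=10, seed=0):
    """Split sample indices into k folds with roughly equal class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds = [[] for _ in range(k)]
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        # Deal indices round-robin so each fold gets a near-equal share of the class.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    return folds


def macro_metrics(y_true, y_pred, classes=CLASSES):
    """Return accuracy and macro-averaged precision, recall, and F1-score."""
    pairs = list(zip(y_true, y_pred))
    precisions, recalls, f1_scores = [], [], []
    for c in classes:
        tp = sum(1 for t, p in pairs if t == c and p == c)
        fp = sum(1 for t, p in pairs if t != c and p == c)
        fn = sum(1 for t, p in pairs if t == c and p != c)
        # Undefined ratios (no predictions or no samples for a class) count as 0.
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1_scores.append(f1)
    n = len(classes)
    return {
        "accuracy": sum(1 for t, p in pairs if t == p) / len(pairs),
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1_scores) / n,
    }


if __name__ == "__main__":
    # Toy labels only; these are NOT the class counts of the 1933-image dataset.
    toy_labels = ["normal"] * 30 + ["benign"] * 20 + ["malignant"] * 50
    folds = stratified_folds(toy_labels, k=10)
    print([len(fold) for fold in folds])
    print(macro_metrics(
        ["normal", "benign", "malignant", "malignant"],
        ["normal", "malignant", "malignant", "malignant"],
    ))
```

In a cross-validation loop, each fold would serve once as the validation set while the remaining nine are used for training, and the per-fold metrics would then be averaged.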

License

Copyright (c) 2026 International Journal of Applied Methods in Electronics and Computers

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.